Meta has launched Llama 3, the successor to Llama 2, and according to benchmarks, the smaller variant of Llama 3 already outperforms the largest variant of Llama 2. As usual, the release features both pre-trained and instruction-fine-tuned language models, and there are two sizes as of now: the Llama3-8B and Llama3-70B.

To build this model, Meta created a new human evaluation set, which contained 1,800 prompts covering key use cases like asking for advice, or brainstorming, classification, closed question-answering, coding, creative writing, extraction, inhabiting a character/persona, open question-answering, reasoning, rewriting, and summarization.

The fact that it has been designed for performance in real-world scenarios is what excites us the most. As is custom at Superteams.ai, we decided to take the model for a spin right after launch.

In this article, we will show the step-by-step process of how to use the open model Llama3-8B. We will start with deployment of the model on a GPU node, and then query it. Once that is done, we will follow it up with an advanced RAG pipeline, built using the model, to show how enterprises can deploy and use it on their own documents for a range of use cases.

Let’s first understand why the model is such a big deal.

Understanding Llama 3

Llama 3 models are fully open-source including model weights, so businesses, researchers or developers incur no cost for access and integration. They are already available across major platforms like AWS and Google Cloud.

This is incredible because it opens up possibilities for a range of use-cases that emerge when models are released open-source, and also reaffirms Meta’s stance around AI. Here are some technical details about the models:

Model Info

- They have a default 8k token context window.

- They outperform other open-source models of their scale like Gemma 7B or Mistral8x22B with a MMLU over 80.

- They have improved reasoning capabilities thanks to an increased focus on coding datasets.

Model Training and Data

- The models have been trained on over 15 trillion tokens from publicly available sources.

- They incorporate a 128K token vocabulary tokenizer.

- Training process utilized advanced data-filtering pipelines for optimal data quality.

- They were trained for efficiency with over 400 TFLOPS per GPU on 16K GPUs.

Performance Benchmarks

- MMLU: 8B model scores 68.4; 70B model achieves 82.0.

- HumanEval: 8B at 62.2; 70B reaches 81.7.

- GSM-8K: 79.6 for 8B; 70B model leads with 93.0.

- MATH dataset: 30.0 for 8B; 70B model scores 50.4.

Now, let’s put the model through some real world tests.

Prerequisites

As Llama 3 is a gated repository, you will need to apply for access from Meta via Hugging Face. To do that, visit:

https://huggingface.co/meta-llama/Meta-Llama-3-8B

Once you accept the terms and conditions and apply with your details, you will have to wait before repo owners from Meta grant access to you. We got it within 15 minutes.

Installing Llama3-8B

Now that we have access to the model sorted, let’s test the model. We will use Ollama to test this out.

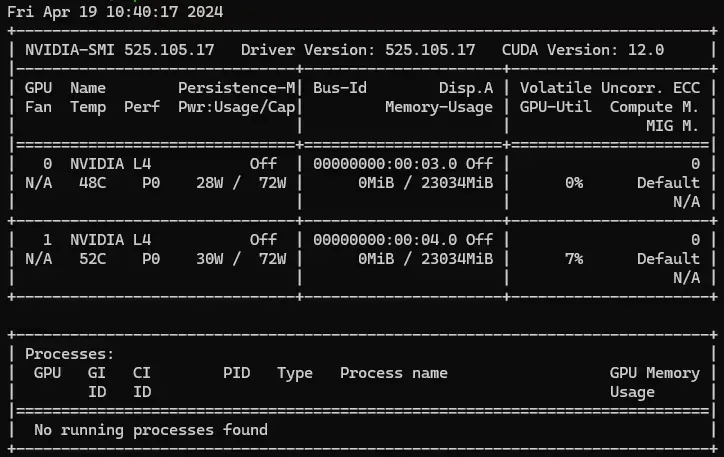

First, we will launch a GPU node. We used 2xL4 on GCP. To test your configuration and to see if you have drivers installed properly, you can run

$ nvidia-smi

Next, let’s test the model.

To do that, follow these steps (we will assume you have a virtual environment or conda environment already set up):

(base) soum@superteams-v100:~$ curl -fsSL https://ollama.com/install.sh | sh

>>> Downloading ollama...

######################################################################## 100.0%##O#-#

######################################################################## 100.0%

>>> Installing ollama to /usr/local/bin...

>>> Creating ollama user...

>>> Adding ollama user to render group...

>>> Adding ollama user to video group...

>>> Adding current user to ollama group...

>>> Creating ollama systemd service...

>>> Enabling and starting ollama service...

Created symlink /etc/systemd/system/default.target.wants/ollama.service → /etc/systemd/system/ollama.service.

>>> NVIDIA GPU installed.Now that we have Ollama installed, we can simply do ‘ollama pull llama3’:

(base) soum@superteams-v100:~$ ollama pull llama3

pulling manifest

pulling 00e1317cbf74... 100% ▕███████████████████████████████████████████████████████████████████▏ 4.7 GB

pulling 4fa551d4f938... 100% ▕███████████████████████████████████████████████████████████████████▏ 12 KB

pulling 8ab4849b038c... 100% ▕███████████████████████████████████████████████████████████████████▏ 254 B

pulling c0aac7c7f00d... 100% ▕███████████████████████████████████████████████████████████████████▏ 128 B

pulling db46ef36ef0b... 100% ▕███████████████████████████████████████████████████████████████████▏ 483 B

verifying sha256 digest

writing manifest

removing any unused layers

successNext, let’s test if we are able to query the model:

(base) soum@superteams-v100:~$ ollama run llama3

>>> What is your name?

I don't have a personal name. I'm an AI designed to assist and communicate with humans, so I don't have a personal identity

or a physical presence. My purpose is to provide information, answer questions, and help users like you with their queries,

without having a personal agenda or a specific personality. You can think of me as a helpful tool or a knowledgeable

companion!General Knowledge

>>> Where is the Eiffel tower?

The Eiffel Tower is located in Paris, France. It's one of the most iconic landmarks in the world and a symbol of French

culture and engineering. The exact address is:

5 Avenue Anatole France,

75007 Paris,

France

The Eiffel Tower is situated on the Champ de Mars, a public park in the 7th arrondissement (district) of Paris. It's easily

accessible by public transportation or on foot from many parts of the city.Math Reasoning

>>> Can you compute this: (4x4)x8

To evaluate this expression, I'll follow the order of operations (PEMDAS):

1. Evaluate the expression inside the parentheses: 4x4 = 16

2. Multiply the result by 8: 16 × 8 = 128

So, (4x4) × 8 = 128!

>>> Can you calculate the value of z where x = 24, y = 58, z = x^2 + y^2 / (y - x)

To calculate the value of z, we can start by simplifying the expression:

z = x^2 + y^2 / (y - x)

We're given that:

x = 24

y = 58

So, let's substitute these values into the equation:

z = (24)^2 + (58)^2 / (58 - 24)

= 576 + 3364 / 34

= 576 + 100

Now, we can simplify the expression further:

z = 576 + 100

= 676

Therefore, the value of z is:

z = 676Code Generation

>>> Can you write a Tailwind code for a frontend that looks like Netflix?

What a great request!

To create a Tailwind CSS code inspired by Netflix, I'll focus on the main layout and design elements. Please note that this is not an exact replica of the Netflix UI, but rather an interpretation with a similar aesthetic.

Here's the code:

```html

<!-- HTML Structure -->

<div class="h-screen bg-gray-900">

<!-- Header Section -->

<header class="fixed top-0 left-0 right-0 bg-black h-20 flex justify-center items-center text-white font-bold">

<nav>

<ul class="flex space-x-4">

<li><a href="#" class="text-white hover:text-gray-400">Home</a></li>

<li><a href="#" class="text-white hover:text-gray-400">TV Shows</a></li>

<li><a href="#" class="text-white hover:text-gray-400">Movies</a></li>

</ul>

</nav>

</header>

<!-- Main Content Section -->

<main class="mt-20 mx-auto w-full h-screen max-w-md">

<!-- Hero Section -->

<section class="flex flex-col justify-center items-center h-screen bg-cover" style="background-image:

url('https://picsum.photos/2000/1000')">

<h1 class="text-white font-bold text-4xl">Binge-Worthy Content</h1>

<p class="text-gray-400 text-lg">Explore the latest shows and movies!</p>

</section>

<!-- Grid Layout for Cards -->

<div class="grid grid-cols-2 gap-8 mt-20">

<div class="bg-white h-screen flex justify-center items-center">

<img src="https://picsum.photos/400/200" alt="Card Image" class="h-full w-full object-cover">

<p class="text-gray-600 text-lg">Show Name</p>

</div>

<!-- Repeat the grid pattern for more cards -->

</div>

</main>

<!-- Footer Section -->

<footer class="bg-black h-20 flex justify-center items-center text-white font-bold mt-auto">

<p>© Netflix</p>

</footer>

</div>

```

This code uses Tailwind's utility-first approach, focusing on the structure and layout. I've included some basic styles to

give you an idea of how the design might look.

**Key elements:**

1. **Header**: A fixed top header with a black background, containing a navigation menu.

2. **Main Content**: The main section is divided into two parts: a hero section for showcasing a prominent image or message,

and a grid layout for displaying cards (show/movies).

3. **Grid Cards**: Each card contains an image and some text, using the `bg-white`, `h-screen`, `flex`, and `justify-center`

utilities to create a responsive design.

4. **Footer**: A simple black footer with copyright information.

Feel free to customize this code by modifying the utility classes or adding your own styles to create a more unique look.

Remember, this is just an interpretation of the Netflix UI, and you may want to adjust it to fit your specific needs. Cypher Schema Understanding and Generation

>>> I need to generate Cypher insertion queries from this following text to build a knowledge graph. Here is the text: Minority Report is a 2002 American science fiction action film[6] directed by Steven Spielberg, loosely based on Philip K. Dick's 1956 novella "The Minority Report". The film takes place in the Washington metropolitan area in the year 2054, in which a specialized police department—Precrime—apprehends criminals by use of foreknowledge provided by three psychics called "precogs". The cast stars Tom Cruise as Precrime chief John Anderton, Colin Farrell as Department of Justice agent Danny Witwer, Samantha Morton as precog Agatha Lively, and Max von Sydow as Precrime director Lamar Burgess.

Here are the Cypher insertion queries to build a knowledge graph based on the text:

**Create nodes**

```cypher

CREATE (minority_report:Movie {title: "Minority Report", year: 2002})

CREATE (steven_spielberg:Director {name: "Steven Spielberg"})

CREATE (philip_k_dick:Author {name: "Philip K. Dick"})

CREATE (tom_cruise:Actor {name: "Tom Cruise"})

CREATE (colin_farrell:Actor {name: "Colin Farrell"})

CREATE (samantha_morton:Actor {name: "Samantha Morton"})

CREATE (max_von_sydow:Actor {name: "Max von Sydow"})

CREATE (washington_metropolitan_area:Location {name: "Washington metropolitan area", city: "Washington"})

CREATE (precime_police_department:Organization {name: "Precrime police department", type: "Police Department"})

CREATE (john_anderton:Person {name: "John Anderton"})

CREATE (danny_witwer:Person {name: "Danny Witwer"})

CREATE (agatha_lively:Person {name: "Agatha Lively"})

CREATE (lamar_burgess:Person {name: "Lamar Burgess"})

CREATE (department_of_justice:Organization {name: "Department of Justice", type: "Government Agency"})

```

**Create relationships**

```cypher

MATCH (minority_report:Movie), (steven_spielberg:Director) WHERE minority_report.title = "Minority Report" AND

steven_spielberg.name = "Steven Spielberg"

CREATE (minority_report)-[:DIRECTED_BY]->(steven_spielberg)

MATCH (philip_k_dick:Author), (novella:Book {title: "The Minority Report"}) WHERE philip_k_dick.name = "Philip K. Dick" AND

novella.title = "The Minority Report"

CREATE (philip_k_dick)-[:WRITTEN]->(novella)Our first impression? The model performs incredibly well on a range of tasks and has the potential to become the de facto model for a range of use-cases.

Now, we will test the model for an advanced RAG application that we will build using Llama3-8B, Haystack and Qdrant Vector Search.

RAG Using Llama3-8B

An LLM like Llama 3 has been trained on public data. Therefore, while it is useful for a range of use-cases that are around general purpose AI, enterprise-specific applications require us to go one step further.

An LLM is useful for an enterprise application only when it is able to leverage the company’s internal knowledge base. Therefore, we will now create an application architecture called RAG, or Retrieval Augmented Generation, that allows the LLM to harness your private data for its responses. This is where it starts becoming truly powerful.

To demonstrate the power of RAG applications, we will harness Llama3-8B to build a customer reviews analyzer. We will use a dataset from Hugging Face, which contains financial news, convert that data into embeddings, insert that into a vector store, and use LLMs to understand that data.

An application like the above can be extremely helpful for tracking financial information and building domain-specific search, or an AI analyst.

Let’s start.

For this particular RAG pipeline, we will use an advanced orchestration framework known as Haystack. Haystack by Deepset.ai is extremely powerful and helps visualize the entire AI workflow as pipelines. We’ll see this as we go along.

We will also use Qdrant, a top vector search engine, for storing document embeddings and search.

First, install the required libraries. We have created a requirements.txt file with the following content:

!pip install -q haystack-ai

!pip install -q ollama-haystack

!pip install -q "datasets>=2.6.1"

!pip install -q "sentence-transformers>=2.2.0"

!pip install -q qdrant-haystackYou can install the dependencies using:

(base) soum@superteams-v100:~/workspace/llama3-rag$ pip install -r requirements.txt

Once the installation is complete, you can get to coding. Let’s first build our ingestion function, which will convert csv data into embeddings, and store it into the Qdrant Vector Store.

Prerequisite: Installing Qdrant

Installing Qdrant locally is very simple:

docker pull qdrant/qdrant

docker run -p 6333:6333 qdrant/qdrant

This starts the Qdrant server on http://localhost:6333

Storing in the Vector Store

Let’s import the relevant libraries.

from haystack import Pipeline

from haystack.document_stores.types import DuplicatePolicy

from haystack.components.builders.prompt_builder import PromptBuilder

from haystack_integrations.components.generators.ollama import OllamaGenerator

from haystack_integrations.components.retrievers.qdrant import QdrantEmbeddingRetriever

from haystack_integrations.document_stores.qdrant import QdrantDocumentStore

from haystack.components.embedders import SentenceTransformersTextEmbedder, SentenceTransformersDocumentEmbedder

from datasets import load_dataset

from haystack import DocumentNext, let’s initialize the document store that we will use across our application:

document_store = QdrantDocumentStore(

url=”http://localhost:6333”,

recreate_index=True,

return_embedding=True,

wait_result_from_api=True,

)We are using our local Qdrant installation. Now let’s load the dataset from Hugging Face, convert them to embeddings, and then store them into the document_store.

dataset = load_dataset("PaulAdversarial/all_news_finance_sm_1h2023", split="train")

documents = [Document(content=doc["title"]) for doc in dataset]

document_embedder = SentenceTransformersDocumentEmbedder()

document_embedder.warm_up()

documents_with_embeddings = document_embedder.run(documents)

document_store.write_documents(documents_with_embeddings.get("documents"),policy=DuplicatePolicy.OVERWRITE)Next, let’s set up our retriever.

retriever = QdrantEmbeddingRetriever(document_store=document_store)

Now, let’s set up our prompt template. We are going to provide the documents retrieved as part of the context, and then pose the question to the LLM.

template = """

Given only the following information, answer the question.

Ignore your own knowledge.

Context:

{% for document in documents %}

{{ document.content }}

{% endfor %}

Question: {{ query }}?

"""

Now, let’s stitch it all together using the Haystack pipeline. We will repurpose our Ollama installation that we used before to serve Llama 3.

pipe = Pipeline()

pipe.add_component("text_embedder", SentenceTransformersTextEmbedder())

pipe.add_component("retriever", retriever)

pipe.add_component("prompt_builder", PromptBuilder(template=template))

pipe.add_component("llm", OllamaGenerator(model="llama3", url="http://localhost:11434/api/generate"))

pipe.connect("text_embedder.embedding", "retriever.query_embedding")

pipe.connect("retriever", "prompt_builder.documents")

pipe.connect("prompt_builder", "llm")

In Haystack, the workflow is to first add components to the pipeline. Then you connect the components in the right order. Haystack also allows you to visualize your pipeline.

Finally, here’s how you query the pipeline.

query = "Give me all the news you have about IMAX"

response = pipe.run({"prompt_builder": {"query": query}, "text_embedder": {"text": query}})

print(response["llm"]["replies"])

This will throw up all the financial news about IMAX from the dataset.

Now let’s put together a Gradio-powered UI. First, let’s install Gradio.

!pip install gradioNext, let’s construct the UI and query the pipeline through the UI.

import gradio as gr

import os

gr.close_all()

with gr.Blocks(theme=gr.themes.Soft()) as demo:

gr.Markdown(

"""

# Welcome to Llama3-8B Finance RAG Chatbot by Superteams.ai!

""")

chatbot = gr.Chatbot()

msg = gr.Textbox()

clear = gr.ClearButton([msg, chatbot])

def respond(message, chat_history):

response = pipe.run({"prompt_builder": {"query": message}, "text_embedder": {"text": message}})

bot_message = f"{response['llm']['replies'][0]}"

chat_history.append((message, bot_message))

return "", chat_history

msg.submit(respond, [msg, chatbot], [msg, chatbot])

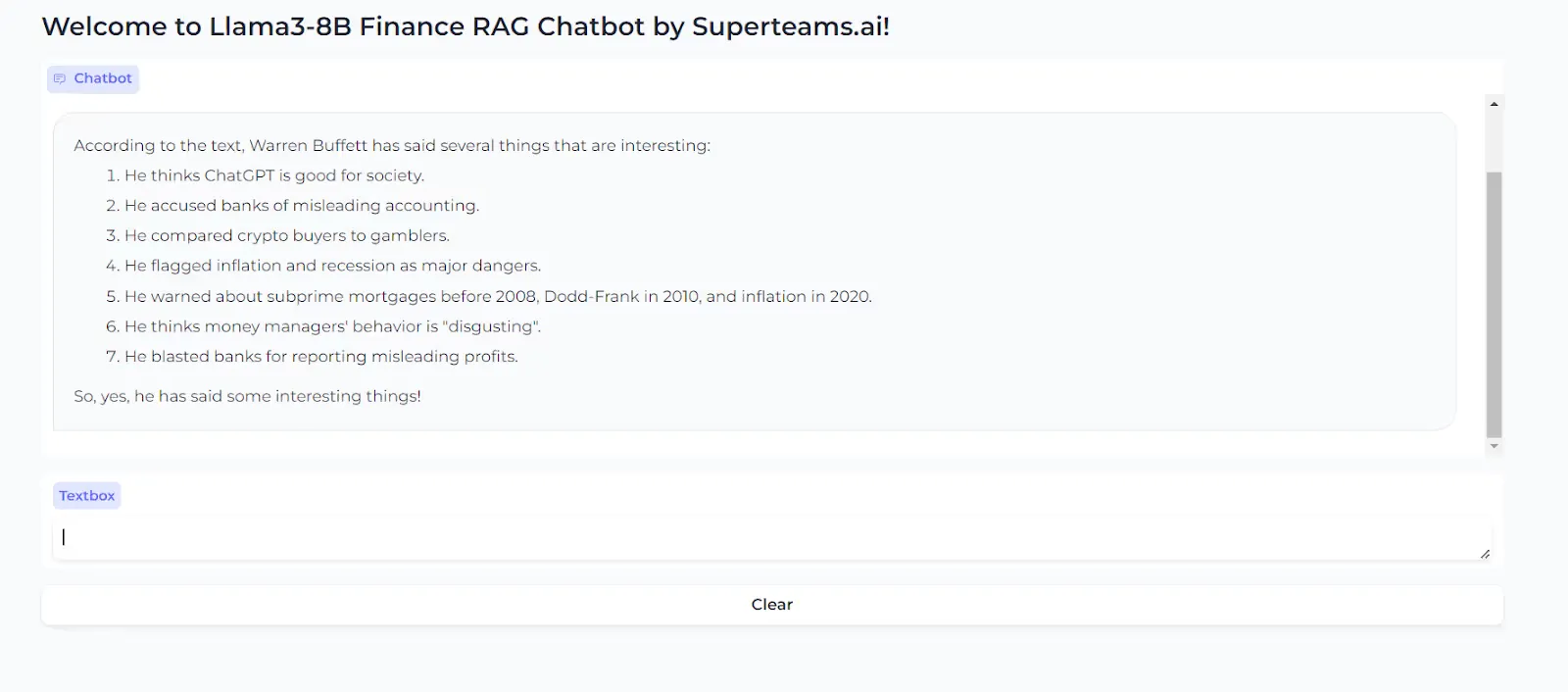

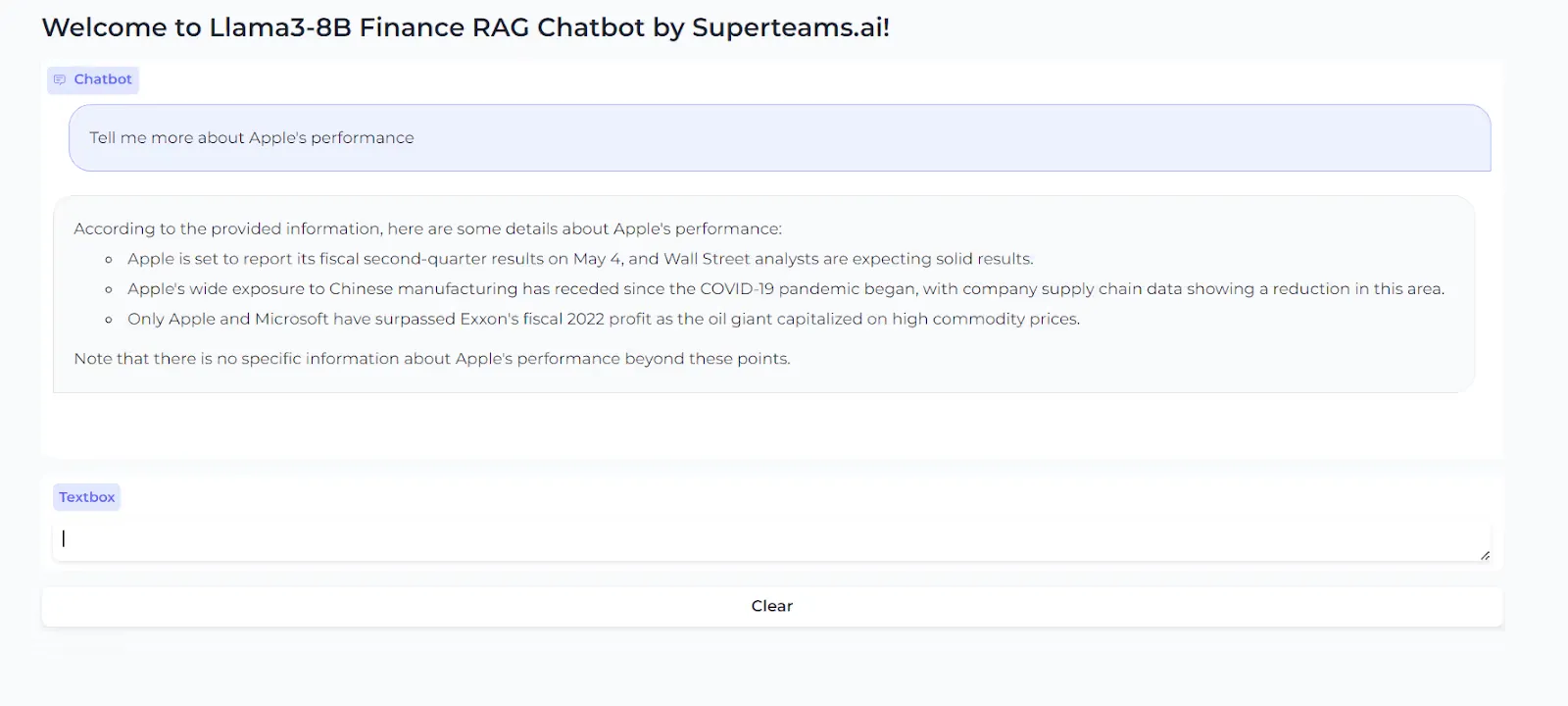

demo.launch(share=True)Great – now we have the full application stitched together. Once you run through the application, you will see a Gradio URL (both public and private) come up.

Let’s ask a few questions to this chatbot.

And one more…

That completes our guide on setting up a RAG with Llama 3. You can follow through the steps with your own data and test the results for yourself. Llama3-8B, needless to say, is our latest favorite open-source LLM.

As a next step, if you want to productionize it, consider using Ray and multiple model deployments for handling parallel requests.

Final Note

In this guide, we showcased the approach to building a RAG AI using Llama3-8B, Qdrant and Haystack by Deepset, for financial news. For enterprise deployments, we do far more sophisticated architectures, such as using ReRankers, parallelism, multitenancy and more. Feel free to reach out to us for a consultation on how Llama3-8B deployments can help your organization.

-Feature-Image.CjBFdO7y_Z1JlSjK.webp)