Google: Gemma 4 26B A4B (Instruct)

Gemma 4 26B A4B is a high-efficiency Mixture-of-Experts (MoE) model released by Google DeepMind on April 3, 2026. It is part of the fourth-generation Gemma family, designed to provide "Frontier-level" intelligence with the speed and cost-profile of a much smaller model. The "A4B" designation stands for Active 4 Billion, indicating that while the model possesses ~26B total parameters, it only activates roughly 4B per token during inference.

On OpenRouter, the ":free" variant serves the same underlying model at no cost. It is subsidized for developer testing and rapid prototyping and is typically rate-limited.
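OpenRouter exposes an OpenAI-compatible chat completions endpoint, and the ":free" variant is selected through the model ID suffix. The sketch below builds (but does not send) such a request using only the standard library. The exact model slug `google/gemma-4-26b-a4b-it:free` is a hypothetical placeholder, not a confirmed ID; check the OpenRouter model page before using it.

```python
import json
import urllib.request

# Hypothetical slug: verify the real model ID on OpenRouter before use.
MODEL_ID = "google/gemma-4-26b-a4b-it:free"
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(prompt: str, api_key: str, model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_request("Summarize MoE routing in one sentence.", api_key="YOUR_API_KEY")
    # To actually send it (requires a valid key and network access):
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp)["choices"][0]["message"]["content"])
    print(req.full_url, req.get_method())
```

Swapping the suffix off the model ID is the only change needed to move from the free tier to the paid endpoint under this sketch's assumptions.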

What It Is

Gemma 4 26B A4B is a natively multimodal MoE model built on the same architectural foundations as Gemini 3. It represents a strategic shift toward "Intelligence-per-Parameter" efficiency. By using a sparse architecture (8 active experts out of 128 total, plus 1 shared expert), it delivers reasoning performance comparable to the dense Gemma 4 31B but runs significantly faster and at a lower cost. It features a massive 256,000-token context window, making it one of the most capable open-weight models for long-document analysis.

What It Can Do

- Native Multimodality: Processes text, high-resolution images, and video (up to 60 seconds at 1fps) natively within the same transformer block.

- Configurable Thinking Mode: Features a dedicated "reasoning" state that can be toggled using the <|think|> token, allowing the model to perform internal chain-of-thought before outputting a final answer.

- Advanced Agentic Skills: Specifically optimized for tool use and function calling, achieving high scores on benchmarks like τ²-Bench for autonomous agent behavior.

- Complex Coding: Outperforms many larger models in code generation and refactoring, particularly in Python, Rust, and Go.

- Document Understanding: Natively handles PDF parsing, UI/Screen understanding, and complex chart comprehension without needing external OCR tools.
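The thinking-mode toggle described above comes down to whether the `<|think|>` token is present in the prompt. The sketch below assumes, as an unverified convention, that the token is placed at the start of the system prompt; `build_messages` is a hypothetical helper, and the official Gemma 4 chat template should be checked for the exact placement.

```python
# Assumption: thinking is enabled by putting the literal "<|think|>" token at the
# start of the system prompt. Verify against the official Gemma 4 chat template.
THINK_TOKEN = "<|think|>"


def build_messages(user_prompt: str, system_prompt: str = "", thinking: bool = False) -> list:
    """Hypothetical helper: build an OpenAI-style message list with optional thinking."""
    system = system_prompt.strip()
    if thinking:
        # Prepend the toggle token; keep any existing system instructions after it.
        system = f"{THINK_TOKEN}\n{system}" if system else THINK_TOKEN
    messages = []
    if system:
        messages.append({"role": "system", "content": system})
    messages.append({"role": "user", "content": user_prompt})
    return messages


if __name__ == "__main__":
    print(build_messages("Is 221 prime?", system_prompt="Answer briefly.", thinking=True))
```

Keeping the toggle in one helper makes it easy to switch reasoning on only for hard queries, trading extra output tokens for accuracy.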

Examples of Its Capabilities

In visual debugging, you can upload a short (up to 60-second) screen recording of a bug occurring in a web app. Gemma 4 26B A4B can analyze the video, identify the exact UI element that is malfunctioning, and then, with your codebase loaded into its 256K context window, propose a specific code fix.

In Legal/Research Workflows, it can ingest multiple 50-page contracts simultaneously. You can then ask it to "identify any conflicting indemnity clauses across these four documents." The model will use its Thinking Mode to cross-reference the clauses and provide a structured summary with citations, all while utilizing only a fraction of the compute required by a dense 70B model.
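Whether a batch of documents fits in one prompt is simple arithmetic against the 256,000-token window. The sketch below assumes roughly 600 tokens per page of dense legal text and reserves 8,000 tokens for the model's answer; both numbers are illustrative assumptions, and real counts depend on the tokenizer and formatting.

```python
# Rough context-budget check. TOKENS_PER_PAGE and the output reserve are assumptions,
# not measured figures; use the model's tokenizer for real counts.
CONTEXT_WINDOW = 256_000
TOKENS_PER_PAGE = 600


def fits_in_context(page_counts, tokens_per_page=TOKENS_PER_PAGE, reserve_for_output=8_000):
    """Return (fits, tokens_needed, token_budget) for a list of document page counts."""
    needed = sum(page_counts) * tokens_per_page
    budget = CONTEXT_WINDOW - reserve_for_output
    return needed <= budget, needed, budget


if __name__ == "__main__":
    # Four 50-page contracts, as in the example above.
    print(fits_in_context([50, 50, 50, 50]))  # (True, 120000, 248000)
```

Under these assumptions the four contracts use under half of the window, leaving room for instructions and thinking tokens.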

How Does It Work?

The model utilizes a Sparse Mixture-of-Experts (MoE) architecture. Instead of passing every piece of data through every parameter, a "router" sends each token to the most relevant "experts" (sub-networks) for that specific task.
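The routing step can be sketched for a single token: a router scores all 128 routed experts, only the top 8 run, their outputs are mixed with softmax weights renormalized over those 8, and a shared expert processes every token. This is a toy illustration with linear "experts" and random weights; real MoE layers use learned feed-forward experts and batched kernels, and the softmax-over-top-k gating shown here is one common scheme, assumed rather than confirmed for Gemma 4.

```python
import math
import random


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def rand_matrix(rng, rows, cols):
    return [[rng.gauss(0, 1) for _ in range(cols)] for _ in range(rows)]


def moe_layer(x, router_W, experts, shared_expert, k=8):
    """Route one token vector x through the top-k routed experts plus a shared expert."""
    logits = matvec(router_W, x)                      # one router score per routed expert
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    gates = softmax([logits[i] for i in top])         # renormalize over the chosen k only
    out = matvec(shared_expert, x)                    # the shared expert sees every token
    for g, i in zip(gates, top):
        y = matvec(experts[i], x)                     # only k routed experts do any work
        out = [o + g * yi for o, yi in zip(out, y)]
    return out, top


if __name__ == "__main__":
    rng = random.Random(0)
    d, n_experts = 4, 128
    router_W = rand_matrix(rng, n_experts, d)
    experts = [rand_matrix(rng, d, d) for _ in range(n_experts)]
    shared = rand_matrix(rng, d, d)
    x = [rng.gauss(0, 1) for _ in range(d)]
    y, chosen = moe_layer(x, router_W, experts, shared, k=8)
    print("experts used:", sorted(chosen))
```

Only 8 of the 128 routed expert matrices are touched per token, which is why per-token compute tracks the active parameter count rather than the total.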

- MTP (Multi-Token Prediction): It predicts several tokens in parallel to increase generation speed.

- Sliding Window Attention: Uses a 1024-token sliding window, so each token attends only to the preceding 1,024 tokens. This keeps attention cost linear in sequence length instead of incurring the quadratic memory costs typically associated with 256K contexts.

- Native Vision Encoder: A 550M-parameter vision encoder is fused directly into the language backbone, allowing for seamless interleaved text and image prompts.
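The saving from a 1024-token window can be made concrete by counting query-key scores in one causal attention layer. The sketch covers a windowed layer only; Gemma 3 interleaved such local layers with periodic global-attention layers, and whether Gemma 4 follows the same pattern is not stated here.

```python
from typing import Optional


def sliding_window_keys(query_pos: int, window: int = 1024) -> range:
    """Key positions a causal sliding-window query at query_pos may attend to."""
    return range(max(0, query_pos - window + 1), query_pos + 1)


def causal_score_count(seq_len: int, window: Optional[int] = None) -> int:
    """Query-key scores computed by one causal attention layer (full if window is None)."""
    if window is None or seq_len <= window:
        return seq_len * (seq_len + 1) // 2       # full lower triangle: grows quadratically
    ramp = window * (window + 1) // 2             # first `window` queries see 1..window keys
    return ramp + (seq_len - window) * window     # every later query sees exactly `window` keys


if __name__ == "__main__":
    n = 256_000
    full = causal_score_count(n)
    local = causal_score_count(n, 1024)
    print(f"full causal: {full:,} scores; 1024-token window: {local:,} scores")
    print(f"reduction: ~{full / local:.0f}x")
```

The closed form in `causal_score_count` is checked against a direct count over `sliding_window_keys`; past the first 1,024 positions, each new token adds a constant 1,024 scores instead of a growing row.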

Applications of Gemma 4 26B A4B

- Autonomous AI Agents: Powering "always-on" assistants (like OpenClaw or Hermes) that require low-latency reasoning and tool use.

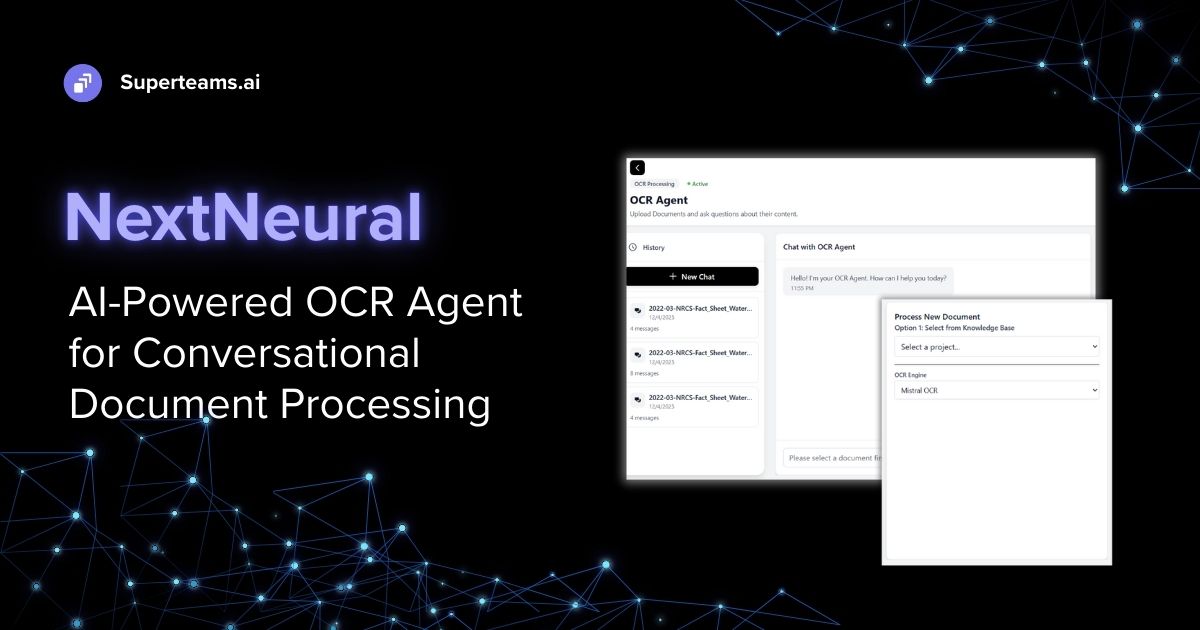

- Multi-Page OCR & Analysis: Automating the digitization of complex financial statements, medical records, or hand-written notes.

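- On-Premise Engineering: Because only ~4B parameters are active per token, per-token compute is close to that of a small dense model. All ~26B weights must still be held in memory, however, so running it on a single consumer GPU (like an RTX 5090) generally relies on quantized weights. With that caveat, it is a strong candidate for on-premise deployment with professional-grade output.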

- Multilingual Translation: Providing high-accuracy translation and localization across 140+ languages.
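The on-premise trade-off above is that memory scales with total parameters while compute scales with active parameters. The sketch estimates both, counting weights only (no KV cache, activations, vision encoder, or runtime overhead) and using the common rule of thumb of about 2 FLOPs per active parameter per token; treat the outputs as rough lower bounds.

```python
TOTAL_PARAMS = 26e9   # ~26B total parameters
ACTIVE_PARAMS = 4e9   # ~4B active per token

# Bytes per weight at common storage precisions (int4 packs two weights per byte).
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, precision: str) -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return params * BYTES_PER_PARAM[precision] / 1e9


def forward_flops_per_token(active_params: float) -> float:
    """Rule of thumb: ~2 FLOPs (one multiply, one add) per active parameter per token."""
    return 2 * active_params


if __name__ == "__main__":
    for p in BYTES_PER_PARAM:
        print(f"{p}: ~{weight_memory_gb(TOTAL_PARAMS, p):.0f} GB of weights")
    print(f"~{forward_flops_per_token(ACTIVE_PARAMS) / 1e9:.0f} GFLOPs per token")
```

At bf16 the weights alone (~52 GB) exceed the RTX 5090's 32 GB of VRAM; an 8-bit copy (~26 GB) leaves little room for the KV cache, while a 4-bit copy (~13 GB) leaves headroom for longer contexts. Per-token compute, by contrast, stays near that of a 4B dense model regardless of precision.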

Related and Previous Models

- Gemma 4 31B (April 2026): The dense counterpart to the 26B A4B, offering slightly more stable reasoning but at a higher computational cost.

- Gemma 3 (2025): The first Gemma family to introduce multimodal support, though with a smaller 128K context window.

- Gemma 2 (2024): The landmark series that trained its smaller variants with knowledge distillation from a larger teacher model, setting the standard for open-weight efficiency.